I have worked on perception and control for fast-paced off-road ground vehicle autonomy at Overland AI since February 2024.

At Intel Labs, I finished a post-doc with Matthias Müller and Vladlen Koltun in April 2022 and then worked as a Research Scientist. My work there covered policy learning for contact-rich robot manipulation and navigation, and simulation for embodied agents.

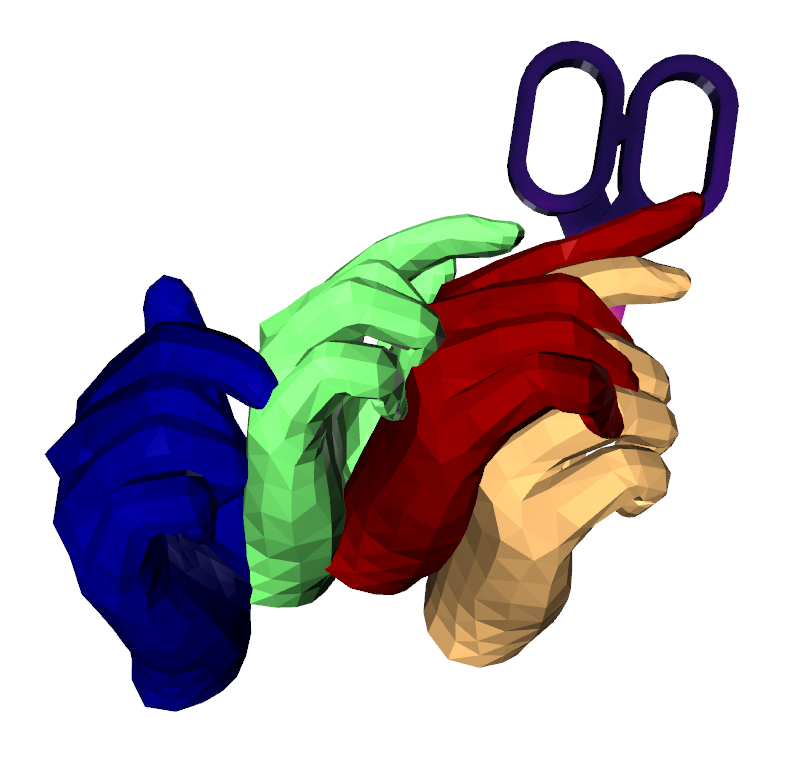

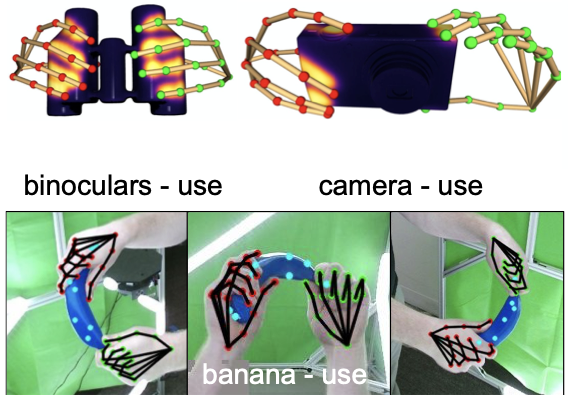

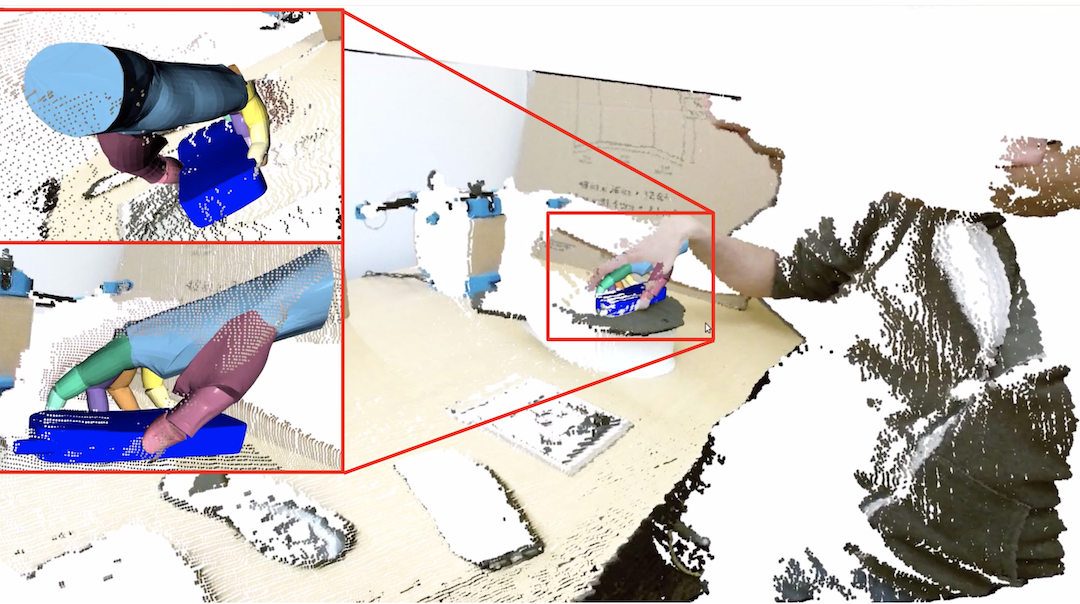

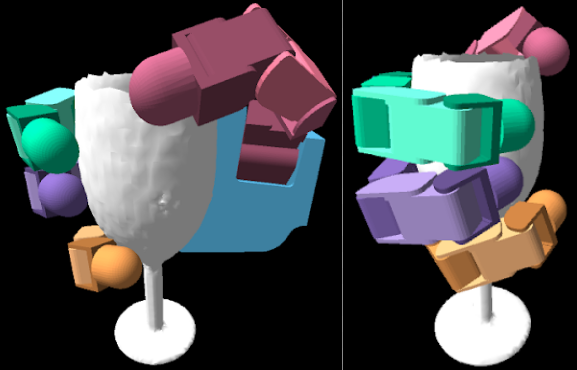

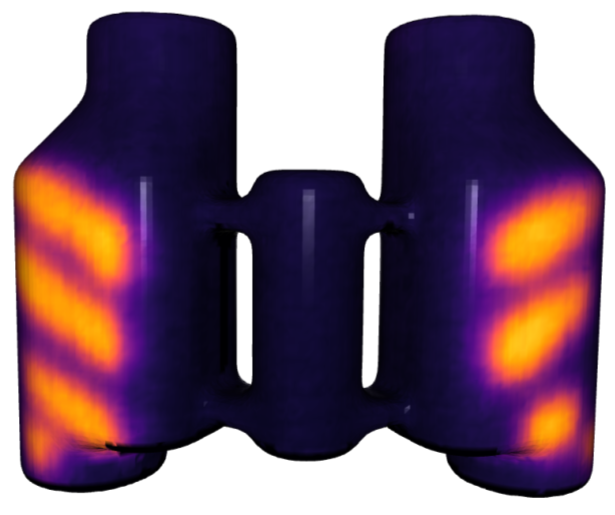

My PhD work in Robotics at Georgia Tech was advised by James Hays. I also worked closely with Charlie Kemp. My PhD thesis was on observing and predicting hand-object interaction during human grasping, especially from the contact perspective. I have a Master's degree in Robotics from the University of Pennsylvania, where my thesis was advised by Kostas Daniilidis, and a Bachelor's degree in electronics from Nirma University, India.

Research

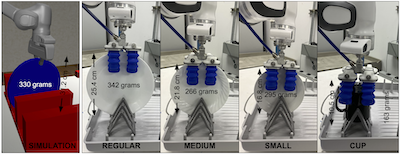

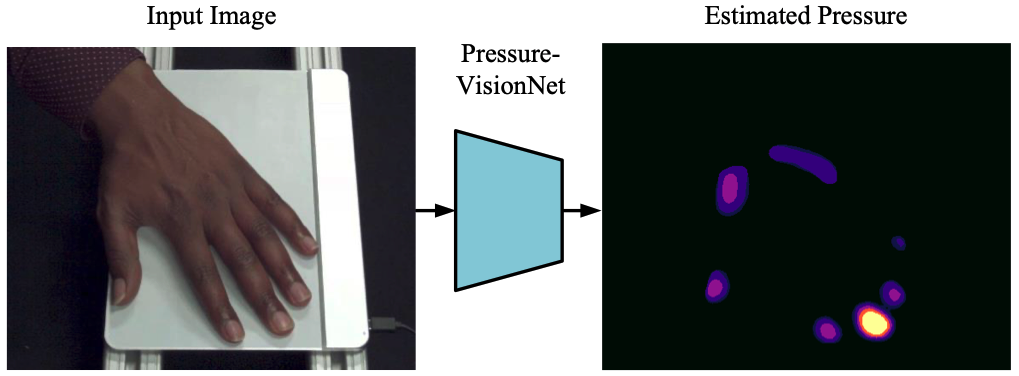

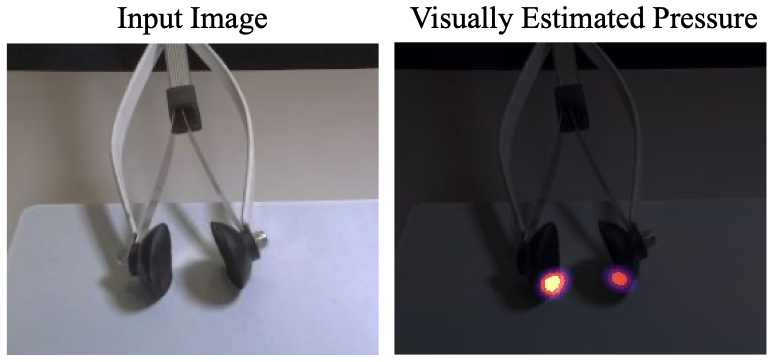

Visual Pressure Estimation and Control for Soft Robotic Grippers IROS '22

Model and dataset for predicting continuous robot gripper contact with planar surfaces from a single RGB image.

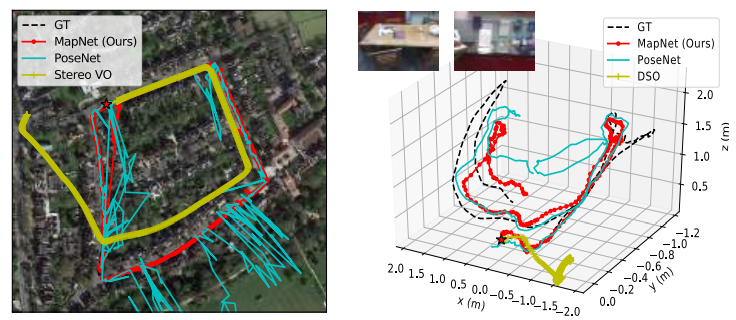

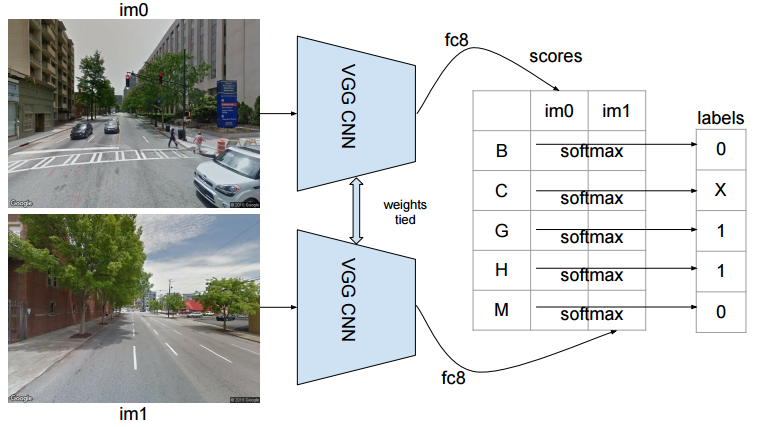

DeepNav: Learning to Navigate Large Cities CVPR 2017

Learning to navigate large cities by training convolutional neural networks to make a navigation decision from the current street-view image.

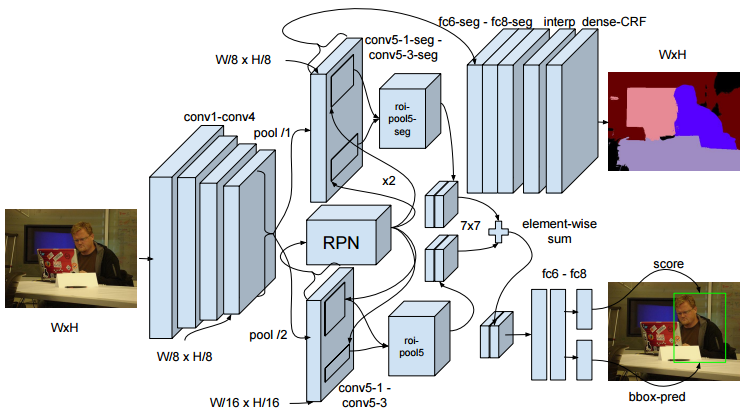

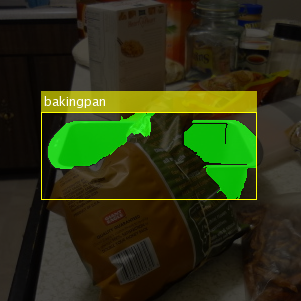

Master's Thesis UPenn, Fall 2014

Detection and segmentation of partially occluded objects.

3D Pose Estimation Summer 2013 / ICRA 2014

We made GRASPY, Penn's PR2 robot, detect and estimate the 6-DOF pose of household objects, all from a single 2D image.

RoboCup Kid Size League Summer 2013

Our team, Team DARwIN, won the Humanoid Kid Size League world championship.

Kinect-based Object Segmentation for Grasping Summer 2011

We used a Kinect to segment spherical and cylindrical objects lying on a table, so that a robotic arm could be guided to their 3D position.

Practical OpenCV 2013, book with more than 82K downloads

A detailed C++ guide for OpenCV through practical projects.