Learning in Robotics - ESE 650

Color image panorama using orientation tracking

For this project, images were recorded using a camera mounted on a plate with an IMU. The challenge was to arrange the images into a panorama using Unscented Kalman Filter-based orientation tracking.
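As a rough illustration of the orientation state such a filter tracks, the sketch below propagates a unit quaternion from gyroscope rates. It covers only a process-model step, not the Unscented Kalman Filter itself, and the function names, the (w, x, y, z) convention, and the body-frame assumption are illustrative choices rather than details of the project.

```python
import math

def quat_multiply(q, r):
    """Hamilton product of two quaternions given as (w, x, y, z)."""
    w1, x1, y1, z1 = q
    w2, x2, y2, z2 = r
    return (
        w1 * w2 - x1 * x2 - y1 * y2 - z1 * z2,
        w1 * x2 + x1 * w2 + y1 * z2 - z1 * y2,
        w1 * y2 - x1 * z2 + y1 * w2 + z1 * x2,
        w1 * z2 + x1 * y2 - y1 * x2 + z1 * w2,
    )

def quat_from_rotation_vector(v):
    """Convert a rotation vector (axis * angle, in radians) to a unit quaternion."""
    angle = math.sqrt(sum(c * c for c in v))
    if angle < 1e-12:
        return (1.0, 0.0, 0.0, 0.0)  # no rotation
    s = math.sin(angle / 2.0) / angle
    return (math.cos(angle / 2.0), v[0] * s, v[1] * s, v[2] * s)

def propagate(q, gyro, dt):
    """Advance orientation q by angular velocity gyro (rad/s, assumed body frame) over dt seconds."""
    dq = quat_from_rotation_vector(tuple(w * dt for w in gyro))
    q_new = quat_multiply(q, dq)
    norm = math.sqrt(sum(c * c for c in q_new))
    return tuple(c / norm for c in q_new)  # renormalize to limit drift

# Usage: rotate about the z axis at 90 degrees per second for one second
q = (1.0, 0.0, 0.0, 0.0)
for _ in range(100):
    q = propagate(q, (0.0, 0.0, math.pi / 2.0), 0.01)
```

In a full filter, a step like this would be applied to each sigma point, and the accelerometer reading would correct the predicted orientation.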

Simultaneous Localization and Mapping using a particle filter

This project used LIDAR and wheel odometry data recorded on a mobile robot to build a planar map along the robot's path while simultaneously localizing the robot in that map. After the map was constructed, RANSAC was used to extract the ground plane from Kinect disparity data, and the ground-plane pixels were overlaid on the planar SLAM map.
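The ground-plane step can be sketched as a basic RANSAC plane fit. This is a minimal version that assumes the disparity data has already been converted to 3D points; the threshold, iteration count, and function names are illustrative assumptions, not the values used in the project.

```python
import random

def plane_from_points(p1, p2, p3):
    """Return (normal, d) for the plane n.x + d = 0 through three points, or None if they are collinear."""
    u = [p2[i] - p1[i] for i in range(3)]
    v = [p3[i] - p1[i] for i in range(3)]
    n = [u[1] * v[2] - u[2] * v[1],
         u[2] * v[0] - u[0] * v[2],
         u[0] * v[1] - u[1] * v[0]]
    length = sum(c * c for c in n) ** 0.5
    if length < 1e-9:
        return None
    n = [c / length for c in n]
    d = -sum(n[i] * p1[i] for i in range(3))
    return n, d

def ransac_plane(points, threshold=0.02, iterations=200, seed=0):
    """Fit a plane by repeatedly sampling 3 points and keeping the model with the most inliers."""
    rng = random.Random(seed)
    best_model, best_inliers = None, []
    for _ in range(iterations):
        model = plane_from_points(*rng.sample(points, 3))
        if model is None:
            continue  # degenerate sample, try again
        n, d = model
        inliers = [i for i, p in enumerate(points)
                   if abs(n[0] * p[0] + n[1] * p[1] + n[2] * p[2] + d) < threshold]
        if len(inliers) > len(best_inliers):
            best_model, best_inliers = model, inliers
    return best_model, best_inliers
```

The returned inlier indices identify the points (and hence the pixels) that belong to the dominant plane, which is what would be projected onto the 2D map.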

Point Cloud registration for 3D mapping

In this project I implemented an efficient 3D point-cloud registration pipeline, based on 3D feature matching with the Point Cloud Library, that could handle large differences in position and orientation between the two point clouds.

Path planning for a robotic vehicle using imitation learning

In this project I used imitation learning to learn a cost map over an aerial photograph for a robotic car travelling through a city, then used the A* algorithm to plan the car's path.
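The planning half of this project can be illustrated with a small A* search over a grid of per-cell costs. The sketch below assumes a 4-connected grid with non-negative costs and takes the cost map as given; it does not show how the costs are learned, and the function name and grid layout are hypothetical.

```python
import heapq

def astar(cost, start, goal):
    """Least-cost 4-connected path on a grid; cost[r][c] is the cost of entering cell (r, c)."""
    rows, cols = len(cost), len(cost[0])
    min_cost = min(min(row) for row in cost)

    def heuristic(cell):
        # Manhattan distance scaled by the cheapest cell never overestimates the remaining cost
        return min_cost * (abs(cell[0] - goal[0]) + abs(cell[1] - goal[1]))

    open_heap = [(heuristic(start), 0.0, start)]
    best_g = {start: 0.0}
    parent = {}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = [cell]
            while cell in parent:
                cell = parent[cell]
                path.append(cell)
            return path[::-1], g
        if g > best_g.get(cell, float("inf")):
            continue  # stale heap entry, a cheaper route to this cell was already found
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nxt[0] < rows and 0 <= nxt[1] < cols:
                ng = g + cost[nxt[0]][nxt[1]]
                if ng < best_g.get(nxt, float("inf")):
                    best_g[nxt] = ng
                    parent[nxt] = cell
                    heapq.heappush(open_heap, (ng + heuristic(nxt), ng, nxt))
    return None, float("inf")

# Usage: the expensive middle column pushes the path around the top-right corner
grid = [
    [1, 1, 1],
    [1, 9, 1],
    [1, 9, 1],
]
path, total = astar(grid, (0, 0), (2, 2))
```

With a learned cost map, cells that expert drivers avoid would carry high cost, so the same search would favor roads over buildings or sidewalks.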

Probabilistic color segmentation of images

In this project I trained Gaussian Mixture Models to segment a red barrel out of color images under different illumination conditions.

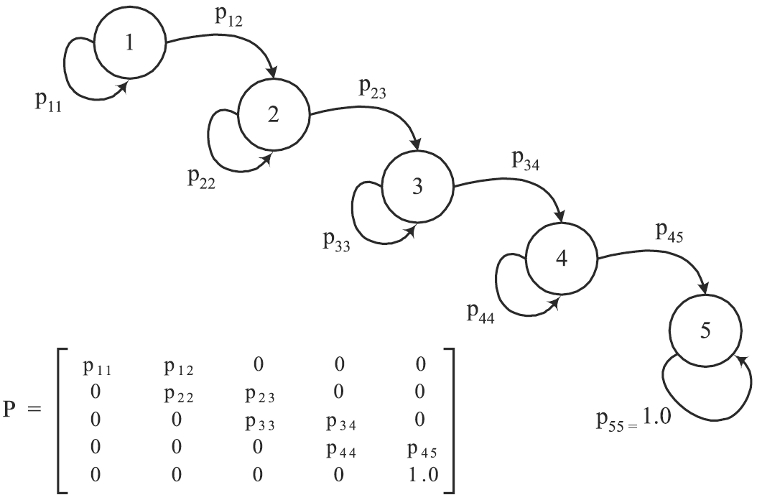
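One way to use trained mixtures for segmentation is to compare, pixel by pixel, the likelihood under a barrel model against the likelihood under a background model. The sketch below assumes that two-model setup, diagonal covariances, and RGB input; all mixture parameters shown are made-up placeholders, not trained values from the project.

```python
import math

def log_gaussian_diag(x, mean, var):
    """Log density of a Gaussian with diagonal covariance (var holds per-channel variances)."""
    return sum(-0.5 * math.log(2.0 * math.pi * v) - 0.5 * (xi - m) ** 2 / v
               for xi, m, v in zip(x, mean, var))

def log_gmm(x, components):
    """Log density of a mixture; components is a list of (weight, mean, var) tuples."""
    logs = [math.log(w) + log_gaussian_diag(x, m, v) for w, m, v in components]
    top = max(logs)
    return top + math.log(sum(math.exp(l - top) for l in logs))  # log-sum-exp for stability

def is_barrel(pixel, barrel_gmm, background_gmm, prior_barrel=0.5):
    """Label a pixel as barrel if its posterior under the barrel mixture is higher."""
    log_barrel = math.log(prior_barrel) + log_gmm(pixel, barrel_gmm)
    log_background = math.log(1.0 - prior_barrel) + log_gmm(pixel, background_gmm)
    return log_barrel > log_background

# Hypothetical parameters: two red modes (bright and shadowed) and one broad background mode
barrel_gmm = [
    (0.6, (200.0, 30.0, 30.0), (400.0, 200.0, 200.0)),
    (0.4, (110.0, 15.0, 15.0), (400.0, 150.0, 150.0)),
]
background_gmm = [(1.0, (120.0, 120.0, 120.0), (3600.0, 3600.0, 3600.0))]

bright_red = is_barrel((205.0, 35.0, 28.0), barrel_gmm, background_gmm)
sky_blue = is_barrel((90.0, 120.0, 200.0), barrel_gmm, background_gmm)
```

Using several components per class is what lets a single model cover the same red surface under both bright and dim lighting.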

Gesture recognition using Hidden Markov Models

In this project I recognized gestures from a set of 6 using IMU data and Hidden Markov Models. I first clustered the 6-channel IMU data into 20 clusters and then trained a separate HMM for each gesture, using cross-validation to determine the number of hidden states.
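Classification with one HMM per gesture usually comes down to scoring a new observation sequence under every model and picking the most likely one. The sketch below shows that scoring step with a scaled forward algorithm over discrete symbols (in this project the symbols would be the 20 cluster indices). The toy models, their names, and their sizes are hypothetical, and training is not shown.

```python
import math

def log_likelihood(obs, start, trans, emit):
    """Scaled forward algorithm: log P(obs | HMM) for a sequence of discrete symbols."""
    n = len(start)
    alpha = [start[i] * emit[i][obs[0]] for i in range(n)]
    log_prob = 0.0
    for t in range(len(obs)):
        if t > 0:
            alpha = [sum(alpha[i] * trans[i][j] for i in range(n)) * emit[j][obs[t]]
                     for j in range(n)]
        scale = sum(alpha)
        if scale == 0.0:
            return float("-inf")  # sequence is impossible under this model
        log_prob += math.log(scale)
        alpha = [a / scale for a in alpha]  # rescale to avoid underflow on long sequences
    return log_prob

def classify(obs, models):
    """Return the name of the model that assigns obs the highest log-likelihood."""
    return max(models, key=lambda name: log_likelihood(obs, *models[name]))

# Hypothetical toy models: (start probabilities, transition matrix, emission matrix)
models = {
    "wave": ([1.0, 0.0],
             [[0.5, 0.5], [0.0, 1.0]],
             [[0.8, 0.1, 0.1], [0.1, 0.8, 0.1]]),
    "circle": ([0.5, 0.5],
               [[0.5, 0.5], [0.5, 0.5]],
               [[0.1, 0.1, 0.8], [0.1, 0.1, 0.8]]),
}
label = classify([0, 0, 1, 1], models)
```

The same held-out log-likelihood is a natural score for cross-validation when choosing the number of hidden states.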